Summary

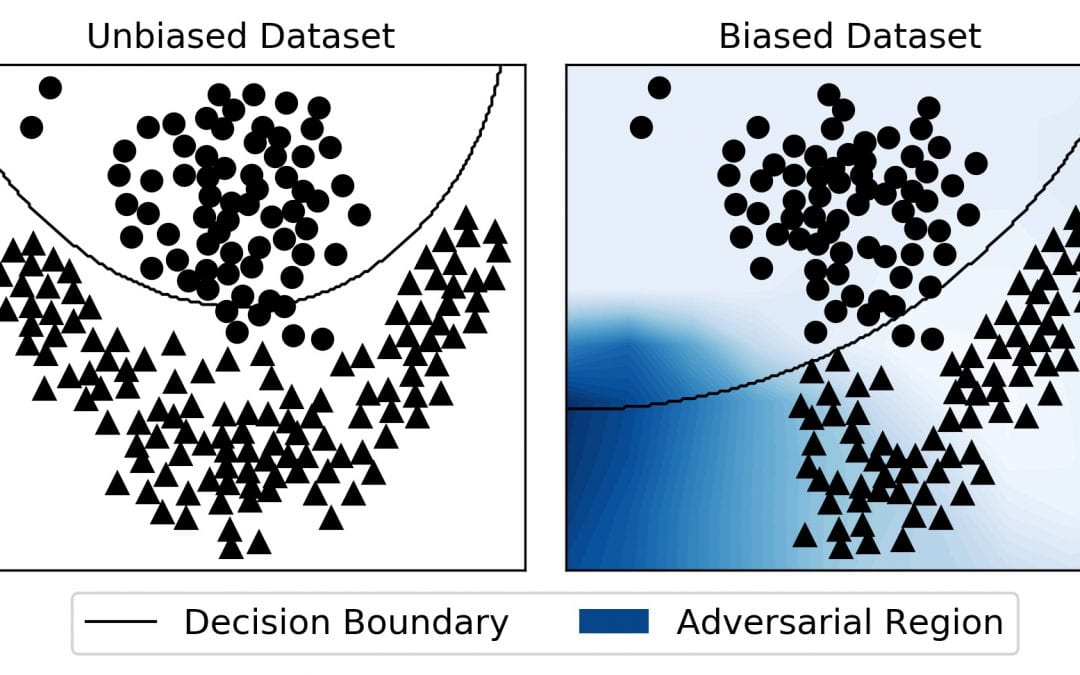

We aim to design and develop new methods that attack machine learning models in order to identify where they are unreliable, for example because of bias in the data or the model. Such bias often arises when certain groups are underrepresented in the training data. The model then performs poorly on those groups, which leads to misclassification and unfair outcomes in many applications. We will develop a framework that identifies regions in the data space where models are prone to fail. Beyond identifying these regions and the affected data, the framework will provide tools to improve the model and will return a score that reflects the model's reliability. This score can be used to certify models without access to their training process. Underrepresentation of minorities in training data is a common problem in New Zealand, and approaches that target it have a wide range of applications, for example in welfare or medicine.
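As a rough illustration of the kind of reliability score described above, the sketch below assumes the model's predictions are already available and that "regions" are given by a simple grouping key, such as a demographic attribute or a binned feature. It computes accuracy per region, flags regions whose accuracy falls more than a margin below the overall accuracy, and uses the worst region's accuracy as a score. The function names, the `margin` parameter, and the worst-region score are hypothetical choices for illustration only, not the method the project will develop, and the toy records are made up.

```python
from collections import defaultdict
from typing import Callable, Hashable, Sequence


def region_accuracies(samples: Sequence[dict], region_of: Callable[[dict], Hashable]) -> dict:
    """Accuracy of the model's predictions within each region (hypothetical helper)."""
    correct: dict = defaultdict(int)
    total: dict = defaultdict(int)
    for s in samples:
        r = region_of(s)
        total[r] += 1
        correct[r] += int(s["pred"] == s["label"])
    return {r: correct[r] / total[r] for r in total}


def reliability_report(samples: Sequence[dict], region_of: Callable[[dict], Hashable],
                       margin: float = 0.1) -> dict:
    """Flag regions more than `margin` below overall accuracy; score = worst-region accuracy.

    Both the flagging rule and the score are illustrative assumptions.
    """
    overall = sum(s["pred"] == s["label"] for s in samples) / len(samples)
    per_region = region_accuracies(samples, region_of)
    weak = [r for r, acc in per_region.items() if acc < overall - margin]
    return {
        "overall": overall,
        "per_region": per_region,
        "weak_regions": weak,
        "score": min(per_region.values()),
    }


# Made-up toy data: group "B" is underrepresented and the model does worse on it.
data = [
    {"group": "A", "label": 1, "pred": 1},
    {"group": "A", "label": 0, "pred": 0},
    {"group": "A", "label": 1, "pred": 1},
    {"group": "A", "label": 0, "pred": 0},
    {"group": "B", "label": 1, "pred": 0},
    {"group": "B", "label": 0, "pred": 0},
]
report = reliability_report(data, region_of=lambda s: s["group"])
print(report["weak_regions"], report["score"])  # prints ['B'] 0.5
```

A real framework would have to discover such regions itself rather than receive them as a fixed key, which is exactly where the attack-based methods described above would come in.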

Duration and Type

- 12 week summer scholarship

Requirements

- Programming in Python

Supervisor and contact

- Joerg Wicker

- Send your CV and academic transcript by email to Joerg Wicker